Incidents

Provider leaks, exposed chats, agent failures, and operational lessons.

3LS research desk

Incidents, control models, and operating notes for security teams governing prompts, files, memory, OAuth grants, and agent tool actions.

Editorial filter

Does data leave?

Prompts, uploads, chat memory, and archives crossing provider or tool boundaries.

Does authority move?

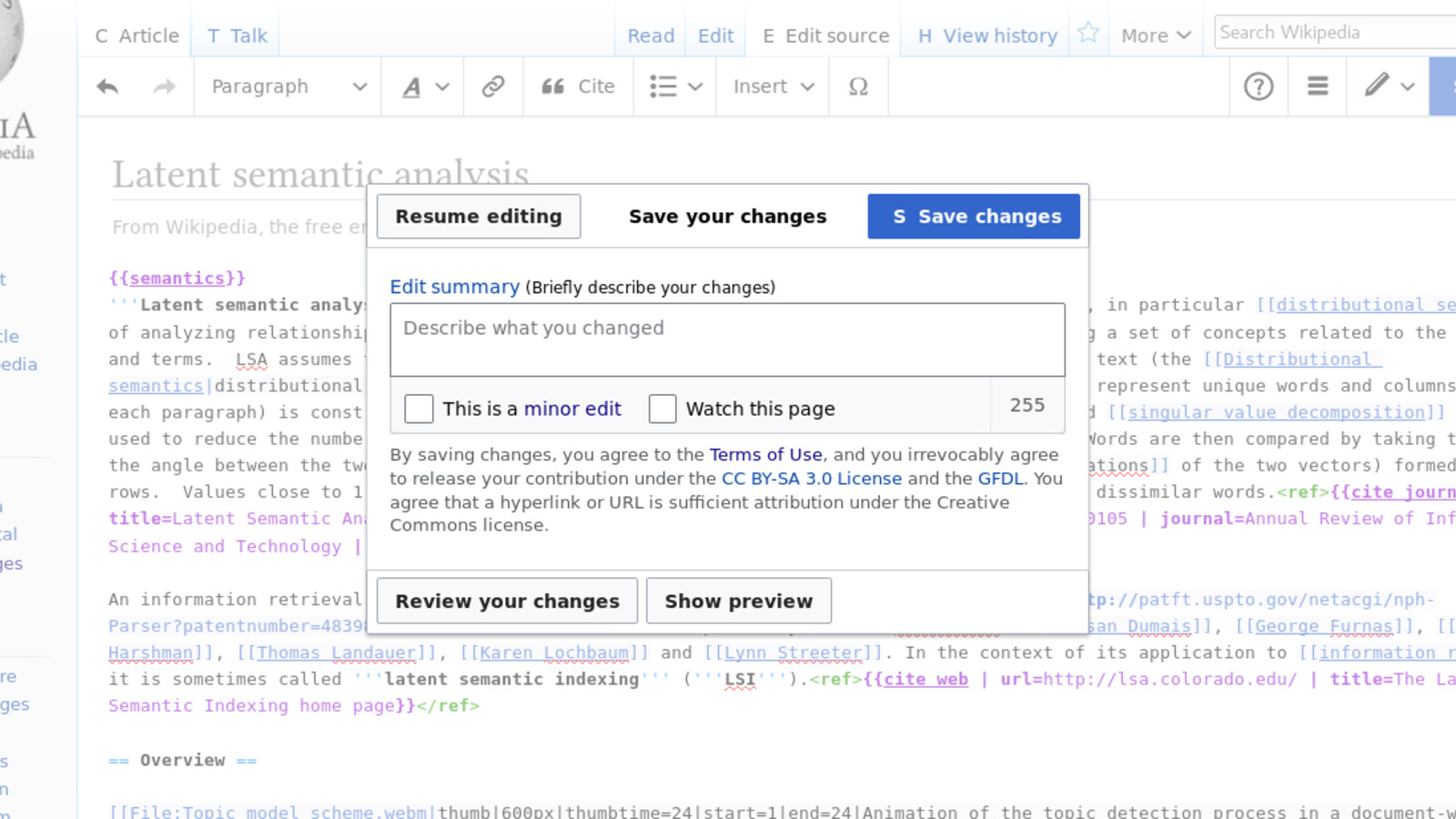

OAuth grants, MCP tools, browser agents, and code agents acting on behalf of users.

Policy, observability, and runtime decisions before data or authority leaves.

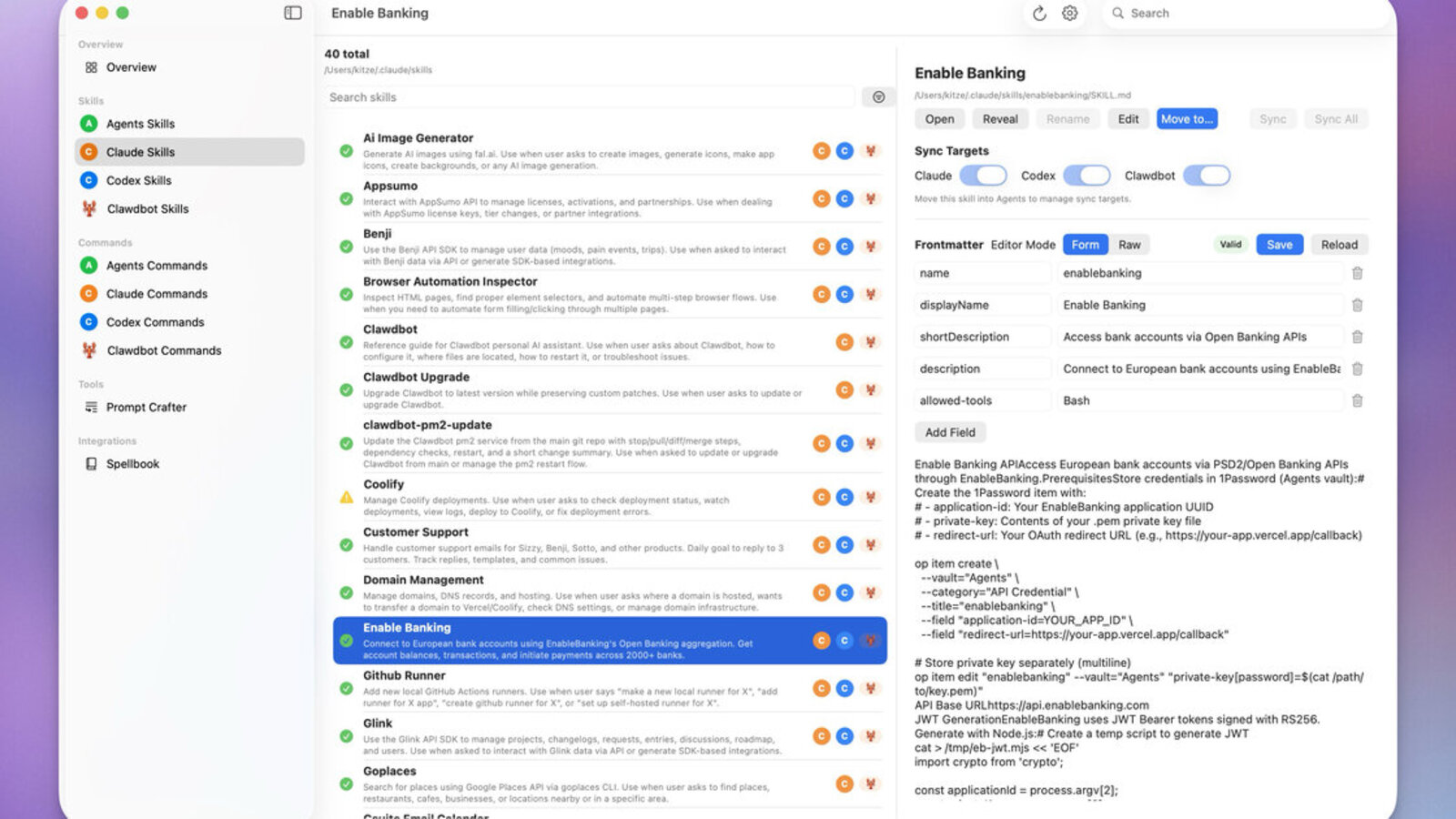

Browsers, MCP, extensions, coding agents, and delegated workflows.

Where prompts, files, memory, and archives become enterprise exposure paths.

Current briefs for AI security teams tracking data movement, tool authority, and runtime controls.

Microsoft's Teams SDK makes it easier to bring existing Slack bots, LangChain chains, and Azure AI Foundry agents into Teams. That turns Teams into an agent ingress point that needs runtime policy, visibility, and evidence.

Latest coverage across incidents, controls, and research
